How it works

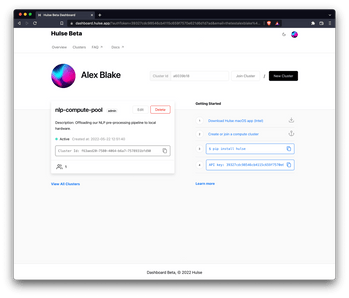

Tap into your existing on-premises hardware to meet your NLP needs.

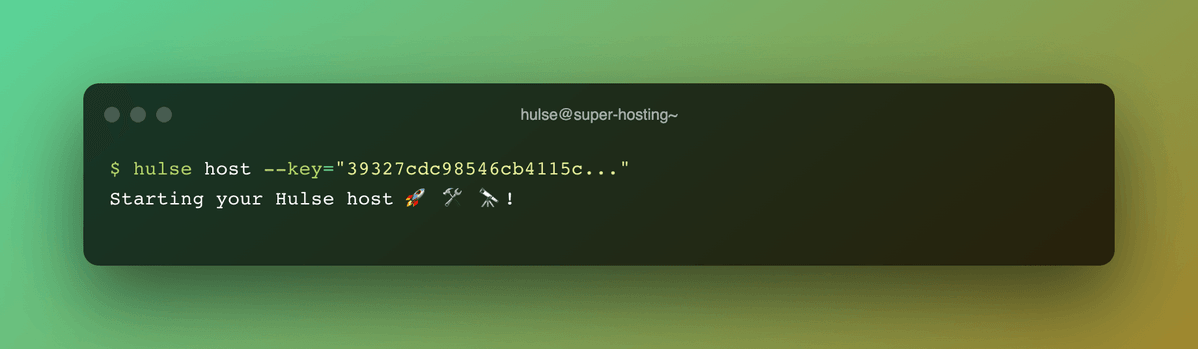

Run your host

Share computing power using the Hulse desktop app (Intel macOS) or the Hulse CLI.

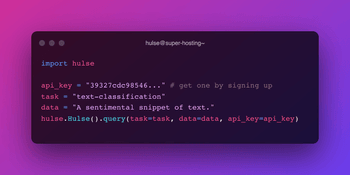

Process data

Run NLP models from the Hugging Face Model Hub on your cluster using our API and Python client.